Claude Code Deep Dive Part 4: Why It Uses Markdown Files Instead of Vector DBs

Claude Code's memory system looks simple on purpose. This piece breaks down the tradeoffs behind Markdown memories, Sonnet side-queries, and the decision to avoid vector databases.

Table of Contents

This is Part 4 of our Claude Code Architecture Deep Dive series. Part 1: 5 Hidden Features | Part 2: The 1,421-Line While Loop | Part 3: Context Engineering — 5-Level Compression Pipeline

This article replaces and deepens our earlier analysis, Claude Code’s Memory Is Simpler Than You Think. The original focused on limitations. This one focuses on why — the first-principles tradeoffs behind every design choice.

The Core Principle: Only Record What Cannot Be Derived

This single constraint governs every decision in Claude Code’s memory system:

Don’t save code patterns — read the current code. Don’t save git history — run

git log. Don’t save file paths — glob the project. Don’t save past bug fixes — they’re in commits.

This isn’t about saving storage. It’s about preventing memory drift.

If a memory says “auth module lives in src/auth/”, one refactor makes that memory a lie. But the model doesn’t know it’s a lie — it trusts specific references by default. A stale memory is worse than no memory at all, because the model acts on it with confidence.

Code is self-describing. The source of truth is always the current state of the project, not a snapshot from three weeks ago. Memory should store meta-information — who the user is, what they prefer, what decisions were made and why — not facts that the codebase already expresses.

Four Types, Closed Taxonomy

Claude Code enforces exactly four memory types. Not tags. Not categories. Four types with hard boundaries:

| Type | What to Store | Example |

|---|---|---|

| user | Identity, preferences, expertise | “Data scientist, focused on observability” |

| feedback | Behavioral corrections AND confirmations | “Don’t summarize after code changes — user reads diffs” |

| project | Decisions, deadlines, stakeholder context | “Merge freeze after 2026-03-05 for mobile release” |

| reference | Pointers to external systems | “Pipeline bugs tracked in Linear INGEST project” |

Why closed taxonomy beats open tagging: Free-form tags cause label explosion. A model tagging memories freely might produce “coding-style”, “code-style”, “style-preference”, “formatting” — four labels for the same concept. Closed taxonomy forces an explicit semantic choice. Each type has different storage structure (feedback requires Why + How to apply fields) and different retrieval behavior. The constraint buys clarity.

Why Positive Feedback Matters More Than Corrections

The feedback type stores both failures AND successes. The source code explains why:

“If you only save corrections, you will avoid past mistakes but drift away from approaches the user has already validated, and may grow overly cautious.”

Imagine the user says “this code style is great, keep doing this.” If you don’t save that, next session the model might “improve” the style — moving away from what the user explicitly liked. Positive feedback anchors the model to known-good patterns. Without anchors, corrections alone push the model toward progressively safer (blander) output.

Project Type: Relative Dates Kill You

When a user says “merge freeze after Thursday”, the memory must store “merge freeze after 2026-03-05.” A memory read three weeks later has no idea what “Thursday” meant. This seems obvious, but it’s an explicit rule in the source code because models default to storing user language verbatim.

Why Sonnet Side-Query Instead of Vector Embeddings

This is the design choice that draws the most criticism. Claude Code uses a live LLM call (Sonnet) to pick relevant memories instead of vector similarity search. Here’s the actual tradeoff:

How it works:

flowchart LR

Query["User query"] --> Scan["Scan memory dir\nRead frontmatter only\nMax 200 files"]

Scan --> Manifest["Format manifest\ntype + filename + timestamp\n+ description"]

Manifest --> Sonnet["Sonnet side-query\n~250ms, 256 tokens\nSelect top 5"]

Sonnet --> Filter["Deduplicate\nRemove already-surfaced"]

Filter --> Inject["Inject as system-reminder\nWith freshness warning"]

Sonnet reads descriptions (not full content), evaluates semantic relevance, and returns up to 5 filenames. The call costs ~250ms and 256 output tokens.

Why this over vector embeddings:

| Dimension | Sonnet Side-Query | Vector Embeddings |

|---|---|---|

| Semantic depth | Full language understanding — “deployment” matches “CI/CD” | Cosine similarity — good but shallow |

| Infrastructure | Zero — one API call | Requires embedding model + vector store |

| Transparency | Can inspect WHY a memory was selected | Opaque similarity scores |

| Cost per query | ~250ms + 256 tokens (shared prompt cache) | Embedding call + search latency |

| Scaling | Degrades past ~200 files | Scales to millions |

The tradeoff is deliberate: for a session-based CLI tool where users typically have 20-100 memories, Sonnet’s semantic understanding beats vector search’s scale. The 250ms latency is hidden entirely through async prefetch — the search runs in parallel while the model generates its response. For the user, memory recall is “free.”

The 5-File Cap: Constraint as Design

Why limit to 5 memories when a user might have 200?

This is not a technical limitation. It’s a behavioral nudge. If the system scaled to inject 50 memories, users would never clean up stale ones. The 5-file cap pushes users to write better descriptions (so the right memories get selected) and consolidate outdated entries (so slots aren’t wasted on stale info).

Design principle: constraints that change user behavior beat constraints that scale infrastructure.

Background Extraction: The Invisible Agent

Claude Code doesn’t just save memories when you say /remember. After every conversation turn where the main agent stops (no more tool calls), a forked background agent runs to extract memorable information.

Key design details:

- Mutual exclusion: If the main agent already wrote a memory in this turn, the extractor skips. No duplicate memories from the same conversation.

- Trailing runs: If extraction is still running when the next turn ends, the new request queues as

pendingContext. When the current extraction finishes, it picks up the pending work. No concurrent writes to the memory directory. - 5-turn hard deadline: The extractor gets at most 5 tool-call turns. Efficiency over completeness.

- Minimal permissions: Read/Grep/Glob unlimited. Write only to the memory directory. Cannot modify project files, execute code, or call external services.

- Shared prompt cache: The forked agent reuses the parent’s cached system prompt — near-zero additional token overhead.

The execution strategy is prescribed in the prompt: “Turn 1: parallel reads of all existing memories. Turn 2: parallel writes of new memories.” Two turns for the common case. The 5-turn budget handles edge cases.

Trust but Verify: The Eval That Proved It

The most impactful section in Claude Code’s memory prompt is TRUSTING_RECALL_SECTION:

“A memory that names a specific function, file, or flag is a claim that it existed when the memory was written. It may have been renamed, removed, or never merged.”

The rule: before acting on a memory that references a file path, verify the file exists (Glob). Before trusting a function name, confirm it’s still there (Grep).

This section’s value was proven empirically: without it, eval pass rate was 0/2. With it, 3/3. Models default to trusting specific references in memory. They’ll confidently say “as stored in memory, the auth module is at src/auth/” — even when that path was renamed weeks ago. The verification requirement breaks this default behavior.

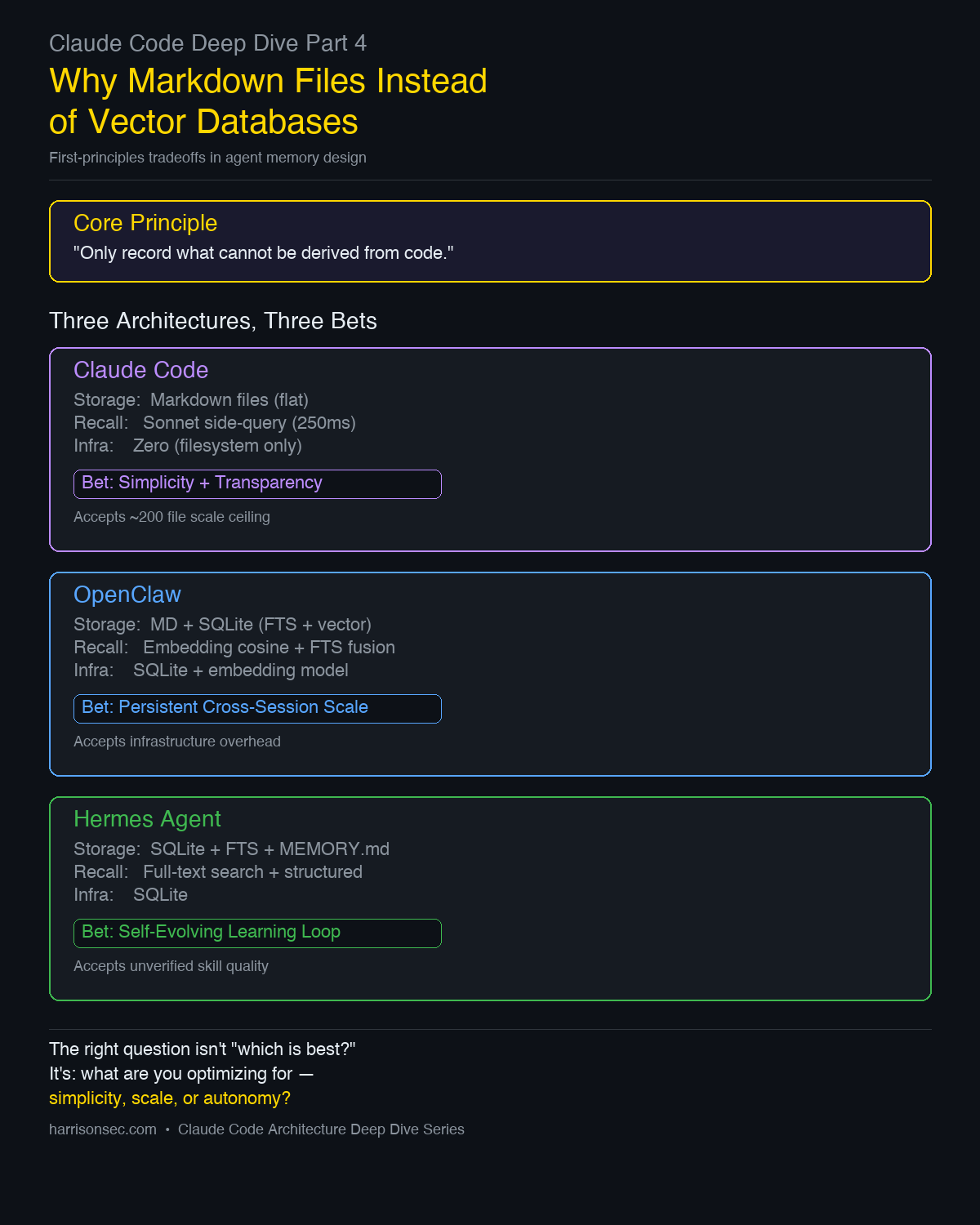

Three Architectures, Three Tradeoffs

This is not a ranking. I’m using OpenClaw and Hermes as contrasts because they represent the two obvious alternative bets: scale and autonomy. Claude Code, OpenClaw, and Hermes Agent made different choices for different deployment models.

| Dimension | Claude Code | OpenClaw | Hermes Agent |

|---|---|---|---|

| Storage | Markdown files (flat) | MD + SQLite (FTS + vector) | SQLite + FTS + MEMORY.md |

| Recall | Sonnet side-query (semantic) | Embedding cosine + FTS fusion | Full-text search + structured queries |

| Infrastructure | Zero (filesystem only) | SQLite + embedding model | SQLite |

| Transparency | Full (plain text, human-readable) | Partial (vector scores opaque) | Partial |

| Learning loop | None (static after write) | None | Self-evolving (auto-generates skills) |

| Session model | Session-based, stateless between sessions | Persistent, cross-session | Persistent, self-improving |

| Scale ceiling | ~200 files by design | Scales with SQLite | Scales with SQLite |

Claude Code’s Bet

Optimize for zero infrastructure and full transparency. Accept a scale ceiling.

For a CLI tool that runs on a developer’s laptop, requiring SQLite or an embedding service is friction. Plain Markdown files are human-readable, git-trackable, and editable with any text editor. The 200-file ceiling is intentional — if you need more, you should be consolidating, not scaling.

When this breaks: Teams with hundreds of shared memories. Long-running projects where memory accumulation outpaces cleanup. Multi-user scenarios where memory needs to be queried across team members.

OpenClaw’s Bet

Accept infrastructure overhead for persistent cross-session scale.

OpenClaw stores memories in SQLite with both full-text search and vector embeddings. This enables fuzzy semantic matching across thousands of memories, weighted fusion of multiple retrieval signals, and persistent state that survives across sessions indefinitely.

When this breaks: Setup complexity. Users must configure embedding models. Vector similarity scores are opaque — when the wrong memory is recalled, debugging why is harder than inspecting a Sonnet side-query.

Hermes Agent’s Bet

Accept complexity for a self-evolving learning loop.

Hermes doesn’t just store memories — it generates skills from completed tasks. After a complex task (5+ tool calls), the agent distills the entire process into a structured skill document. Next time it encounters a similar task, it loads the skill instead of solving from scratch. Skills self-iterate: if the agent finds a better approach during execution, it updates the skill automatically.

When this breaks: Skill quality is unverified. A bad skill propagated through the learning loop compounds errors. The self-evolving mechanism needs guardrails that don’t exist yet — there’s no eval framework for auto-generated skills.

The Right Choice Depends on Your Deployment Model

Session-based, single user, zero setup → Claude Code's approach

Persistent, multi-user, cross-session → OpenClaw's approach

Autonomous, self-improving, research → Hermes's approach

There is no universal “best.” The first-principles question is: what are you optimizing for — simplicity, scale, or autonomy?

What This Teaches About Agent Design

Three principles that transfer beyond memory systems:

Constraints that change user behavior > constraints that scale infrastructure. The 5-file cap is more effective than unlimited vector search, because it forces better memory hygiene. Don’t build capacity for a mess — design incentives for cleanliness.

Eval data beats intuition for prompt engineering. The trust-verification section wasn’t added because someone thought it was a good idea. It was added because evals went from 0/2 to 3/3. If you can’t measure it, you’re guessing.

Use the model’s own reasoning for retrieval when latency allows. Sonnet understanding “deployment” relates to “CI/CD” is something no keyword match or embedding similarity can reliably do. When your retrieval budget allows a model call, the quality ceiling is higher than any static index.

Previous: Part 3: Context Engineering — 5-Level Compression Pipeline | Part 2: The 1,421-Line While Loop | Part 1: 5 Hidden Features

See also: Claude Code + Codex: Two Brains for how dual-AI workflows complement the memory system.

Comments

This space is waiting for your voice.

Comments will be supported shortly. Stay connected for updates!

This section will display user comments from various platforms like X, Reddit, YouTube, and more. Comments will be curated for quality and relevance.

Have questions? Reach out through:

Want to see your comment featured? Mention us on X or tag us on Reddit.