Go's Concurrency Is About Structure, Not Speed: chan and context as Lifecycle Primitives

After a few years writing production Go, I stopped thinking of chan as a data pipe and context as a parameter. They're both lifecycle primitives — chan draws the boundary of 'how many alive', context draws the boundary of 'when to die'. Here's why that mental model changes the code you write.

Table of Contents

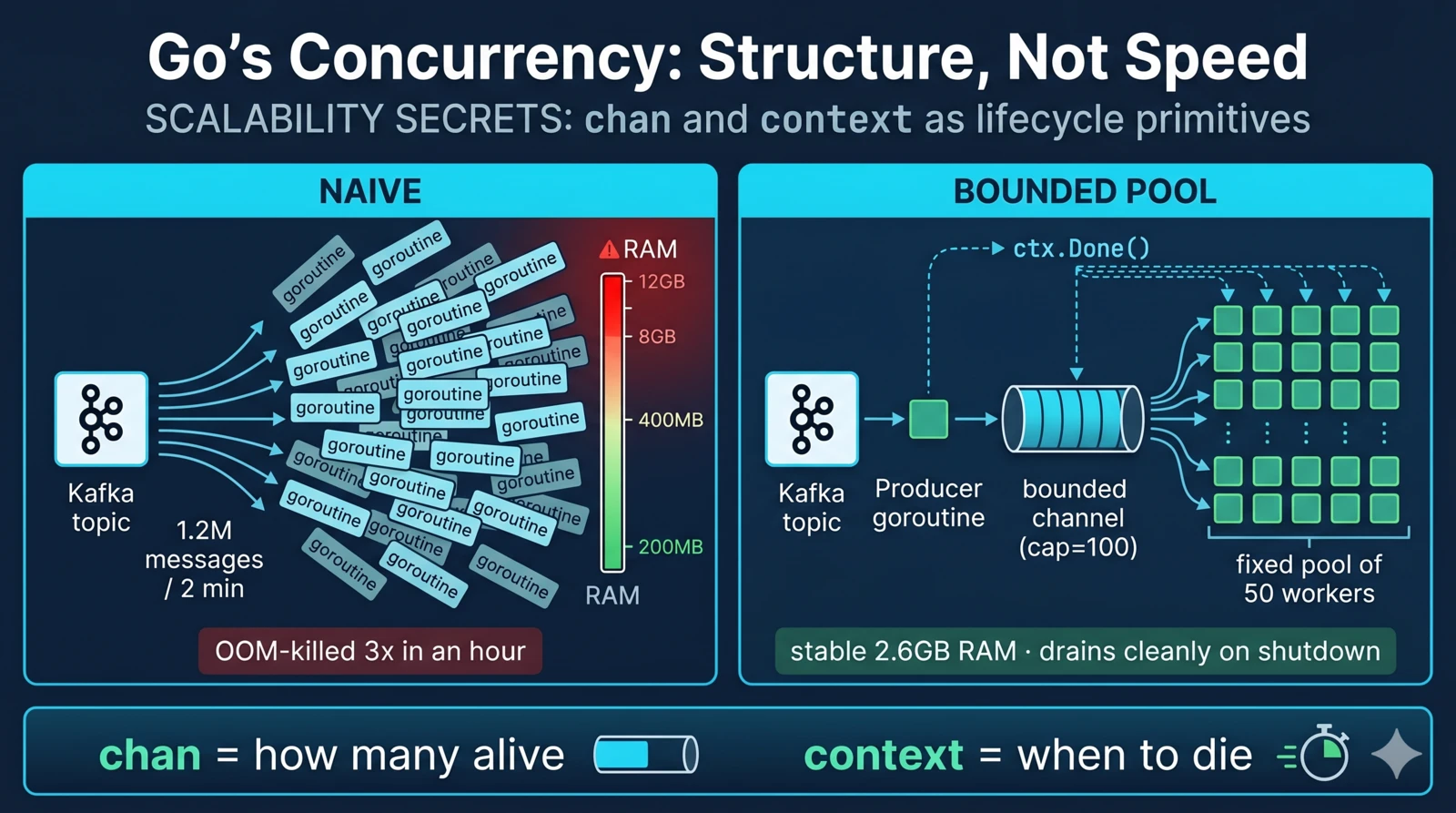

For a while, I thought channels were Go’s way of doing message passing. Something like Erlang processes or actors, except with a simpler syntax. That understanding is fine if you’re writing tutorials. It is not fine when you’ve just OOM-killed a pod for the third time in an hour because your worker pool wasn’t really a pool.

The moment it clicked for me was during a production incident. A Kafka consumer service had been humming along for months at about 1,000 messages per second. Then an upstream team replayed twelve hours of events into the topic at once — roughly 1.2 million messages in two minutes.

The consumer code looked like this, more or less:

for msg := range kafkaMessages {

go process(msg) // one goroutine per message, fire and forget

}

Here’s what the runtime tried to do: spawn 1.2 million goroutines as fast as it could. It did. Heap climbed from 200 MB to 12 GB in about forty seconds. GC pauses went from 2 ms to 800 ms. The pod got OOM-killed. Kubernetes restarted it. On restart, it re-read the uncommitted offsets. Repeat. It took forty minutes and manual producer-side rate limiting upstream before the system would stay up.

The bug wasn’t Kafka. It wasn’t Go. It was the mental model — treating goroutines as “free” and treating channels as “a way to move data between them.” Goroutines are not free under load. And channels are not pipes.

tl;dr —

chanandcontextaren’t just concurrency utilities. They’re the two primitives Go gives you for drawing the boundaries of aliveness in your program.chanbounds how many things are alive at once (backpressure, ownership).contextbounds when they stop being alive (cancellation, deadline). Use them as the skeleton of your design, not as implementation details bolted on at the end.

The Bounded Pool Fix

The fix for the Kafka disaster is the classic bounded worker pool. The shape looks like this:

flowchart LR

Kafka[(Kafka topic)] --> Producer["Producer goroutine

reads one at a time"]

Producer -->|"bounded channel

capacity N"| Jobs{{"jobs chan"}}

Jobs --> W1["Worker 1"]

Jobs --> W2["Worker 2"]

Jobs --> W3["Worker ..."]

Jobs --> Wn["Worker M

(fixed count)"]

Ctx[("ctx.Done()

broadcast cancel")] -.-> Producer

Ctx -.-> W1

Ctx -.-> W2

Ctx -.-> W3

Ctx -.-> Wn

classDef clamp fill:#fef5e7,stroke:#b7791f,stroke-width:2px

classDef signal fill:#faf5ff,stroke:#6b46c1,stroke-dasharray:5 5

class Jobs clamp

class Ctx signal

The bounded channel is the concurrency clamp. The context is the kill switch. Neither alone is enough; together they give you a pipeline that drains cleanly under shutdown and refuses to explode under load.

Here it is in full, because the code matters:

func run(ctx context.Context, consumer *kafka.Consumer) error {

const (

workers = 50 // fixed pool

bufferSize = 100 // bounded queue

)

jobs := make(chan Message, bufferSize)

// Spawn fixed workers

var wg sync.WaitGroup

for i := 0; i < workers; i++ {

wg.Add(1)

go func() {

defer wg.Done()

worker(ctx, jobs)

}()

}

// Producer: push into bounded jobs, blocks when full

go func() {

defer close(jobs) // tell workers we're done

for {

msg, err := consumer.ReadMessage(ctx)

if err != nil {

return

}

select {

case jobs <- msg:

// enqueued; producer moves on

case <-ctx.Done():

return

}

}

}()

<-ctx.Done() // wait for shutdown

wg.Wait()

return ctx.Err()

}

func worker(ctx context.Context, jobs <-chan Message) {

for {

select {

case msg, ok := <-jobs:

if !ok {

return // producer closed the channel

}

if err := process(ctx, msg); err != nil {

log.Error(err)

}

case <-ctx.Done():

return

}

}

}

Look at what this code does that the broken version didn’t:

- Fixed number of workers. 50, period. Never more, regardless of input rate.

- Bounded queue. At most 100 in-flight messages between producer and workers. When the queue is full, the producer stops reading from Kafka.

- Backpressure is implicit. The blocking send on

jobs <- msgis the backpressure mechanism. No complicated flow control needed. The channel is the mechanism. - Cancellation is wired everywhere.

ctx.Done()in producer, worker, and consumer.ReadMessage. Any of them dies with the parent.

That’s it. No semaphores. No rate limiters. No backoff. The channel semantics do the whole job. The channel isn’t a pipe; it’s a clamp.

Channels Are Lifecycle Primitives

This is the insight I wish I’d had earlier: a channel isn’t really about data transfer. It’s about ownership and aliveness.

When you send on a channel, you’re transferring ownership of a value from the sender to the receiver. The sender no longer owns it; the receiver does. That’s useful, and it’s the “share memory by communicating” idea you’ve probably read a dozen times.

But the deeper use is backpressure. A channel with capacity N means “at most N things can be in flight between these two points in the program.” When it’s full, the producer has to stop. That stop is the entire backpressure signal — no separate rate limiter, no token bucket, no hand-rolled semaphore. The buffer size is the concurrency bound.

Once you see this, you stop thinking of channels as “fancy queues” and start thinking of them as structural declarations: this is how many things can be happening in this zone of my program. That’s a very different design tool.

Four patterns that fall out

Bounded pool. The Kafka example above. Fixed workers consume from a bounded channel. The channel is the clamp on in-flight work.

Fan-out, fan-in. One producer, N workers, one aggregator. Each stage is a channel. The sizes of those channels are the concurrency limits between stages.

producer → [chan N] → worker pool (M) → [chan N'] → aggregator

Rate-limited writer. Want to batch writes to a slow downstream? One channel in, one goroutine that flushes every 100 items or every 500ms, whichever comes first. The channel is the queue; the goroutine is the flush policy.

Graceful shutdown signal. A chan struct{} closed on shutdown is a broadcast to every goroutine listening. Every place that checks case <-done: gets the signal at the same time, for free.

None of these need mutexes. Mutexes show up when you have shared mutable state that multiple goroutines read and modify together — a cache, a counter, a shared map. That’s different from “multiple goroutines coordinating their lifecycles,” which is what channels are for.

The rule of thumb I use:

- Coordinating goroutines? Channels.

- Sharing a counter or cache?

sync.Mutex,sync.RWMutex, orsync/atomic. - Both? Channels for the outer shape (who’s alive, when to stop), mutex for the inner state (protected data).

Context Is the Other Half

If chan defines “how many alive,” context defines “when to die.” You already know the story if you’ve written any Go: context.Context carries a cancellation signal, an optional deadline, and a shallow bag of request-scoped metadata. It propagates down through function calls, and when it fires, every goroutine holding it is asked to stop.

What I want to emphasize is the pairing.

In the bounded-pool example above, look at where ctx appears:

- In the worker’s

selectloop — so a worker can stop mid-wait on<-jobs. - In the producer’s

select— so the producer can stop mid-wait onjobs <- msg. - In the call to

consumer.ReadMessage(ctx)— so the Kafka read unblocks immediately on shutdown.

Remove any one of those and the shutdown path has a hole. With all three, cancel() on the parent context makes the entire pipeline drain and stop cleanly in under a second. The channel decides the structure; the context decides the termination.

Neither primitive alone is enough. A bounded channel without cancellation will keep processing until its queue drains — which can be minutes for a deep queue. A cancelled context without a bounded channel still lets you create unbounded goroutines between now and the moment everyone notices. You need both.

chandraws the boundaries in space.contextdraws the boundary in time. Together they describe the shape and the lifetime of concurrent work.

What “Structure, Not Speed” Actually Means

Go’s concurrency model is often sold as fast. Sometimes it is. Per-request throughput in well-written Go is solidly middle of the pack — beaten by Rust and C++, comparable to Java and C#. You do not pick Go because it’s fast.

You pick Go because the design of a concurrent program becomes tractable. A senior engineer reading a goroutine-and-channels design understands what’s alive and what bounds it. A junior engineer reading the same code doesn’t have to know about monitors, condition variables, or lock ordering. The shape of the program is visible in the channel declarations.

That’s the “structure” pitch. And it works because the primitives compose:

- Bounded channels compose into pipelines with known concurrency at each stage.

- Contexts compose into a lifetime tree where cancelling any subtree stops everything below it.

- Select statements compose cancellation, timeouts, and channel operations into a single readable switch.

The failure mode — the one that gave me the Kafka outage — is treating these primitives as optional utilities you reach for when a standard pattern doesn’t fit. They aren’t. They’re the first-class design vocabulary of concurrent Go. The moment you’re writing concurrent code without thinking in channels and contexts, you’ve left the paved road.

Small Things That Matter

A few tactical points I’ve learned the expensive way:

- Always document channel ownership. Who closes it? Who sends? Who receives? A closed channel panics on send. A nil channel blocks forever in select. These are easy to reason about if ownership is clear, and confusing if it isn’t. I use comments right at the declaration site:

// jobs: producer sends and closes; workers receive. - Close from the sender, not the receiver. There’s exactly one sender and it owns the lifecycle. Multiple senders? Use a separate

donechannel or async.Once. selectwithdefaultis not backpressure, it’s drop.select { case ch <- x: default: }drops the message if the channel is full. Sometimes that’s what you want (metrics sampling). Often it’s a bug disguised as a performance optimization.- Unbuffered channels are rendezvous, not pipes. An unbuffered send completes the instant a receiver is ready, not before. This is sometimes exactly the synchronization you want (handoff semantics) and sometimes a deadlock waiting to happen.

- Test under load, not just logic. The Kafka incident would have been caught by any realistic load test. Unit tests happily ran the

go process(msg)version and passed. Load is what reveals structural bugs.

The Real Lesson

Three years ago I’d have written “use goroutines for parallelism, channels for communication, context for cancellation” and considered that advice. I don’t think it’s wrong, but it misses the point.

The better framing is: chan and context are the two primitives for drawing boundaries around concurrent work. One draws the boundary of “how many alive.” The other draws “when to die.” Everything else — the pools, the pipelines, the cancellation trees — is built by composing these two.

A design that doesn’t specify those boundaries isn’t really a design. It’s just code that happens to spawn goroutines. Sometimes it works. Sometimes it eats a pod’s memory in forty seconds.

The Kafka incident fixed itself the day we stopped writing go process(msg) and started writing jobs <- msg. The second version is longer. It’s also the version that doesn’t page us at 3 AM.

Related

- Why Go Handles Millions of Connections: User-Space Context Switching, Explained — why spawning goroutines is cheap in the first place. The foundation that lets you do any of this.

- Go Context in Distributed Systems: What Actually Works in Production — the sibling post on context propagation patterns and the

context.Background()trap. - Why Your “Fail-Fast” Strategy is Killing Your Distributed System — a different lens on the same underlying question: what should your program do when the world gets slow?

🎧 More Ways to Consume This Content

I occasionally advise small teams on backend reliability, Go performance, and production AI systems. Learn more: /services

Comments

This space is waiting for your voice.

Comments will be supported shortly. Stay connected for updates!

This section will display user comments from various platforms like X, Reddit, YouTube, and more. Comments will be curated for quality and relevance.

Have questions? Reach out through:

Want to see your comment featured? Mention us on X or tag us on Reddit.