How I Improved an AI Agent from 40% to 60% — With A/B Test Data

Same model, same test cases, 20 percentage points better (40% to 60%). Seven of the eight fixes were pure code, with zero LLM cost. Here's exactly what I changed and why it worked.

AI Operator track — production AI agent engineering. Validation loops, tool-call reliability, completion ownership, context engineering, cost forensics, and observability for systems that ship and stay shipped.

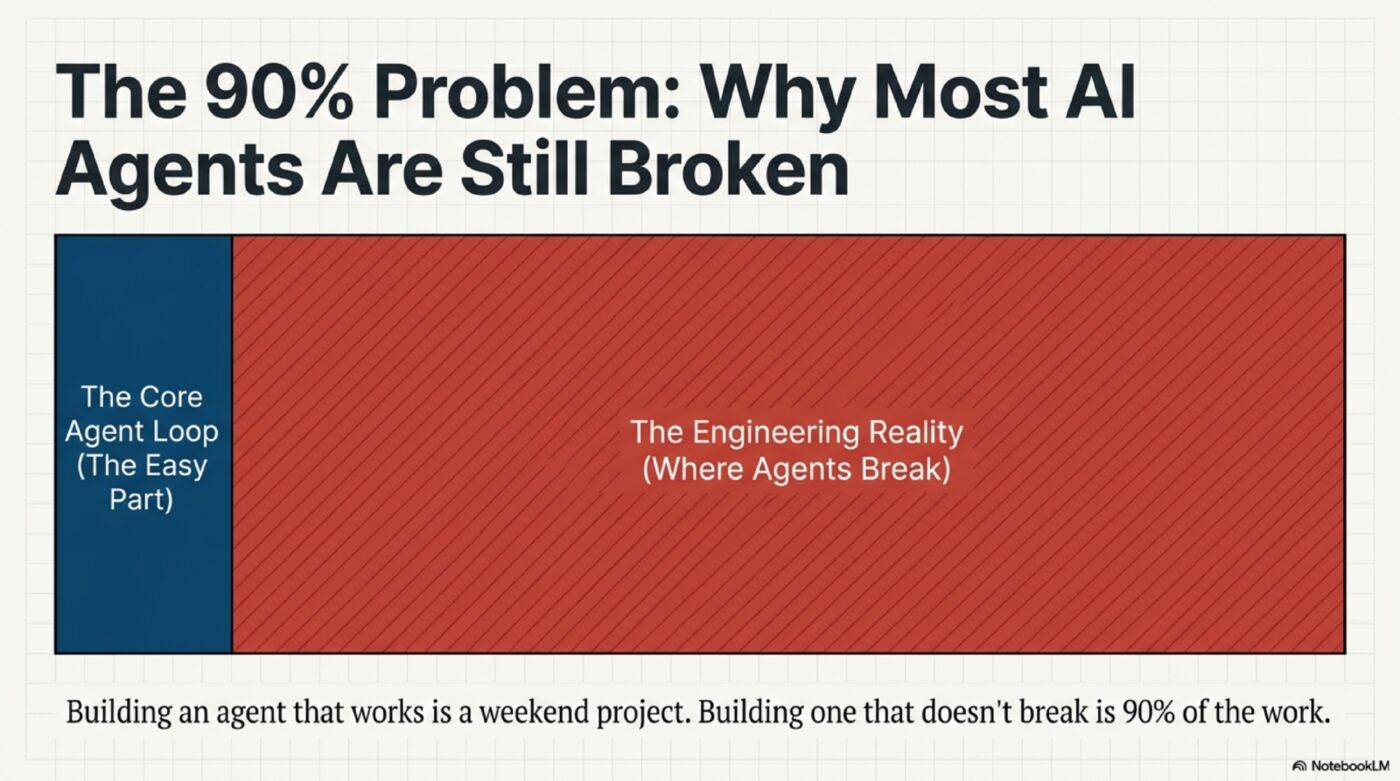

Long-form writing and video on production AI agent engineering — the 90% of work that happens after the model gives you a demo that works.

Topics: validation loops, tool-call completion ownership, context engineering, retry budgets, cost forensics, and observability for systems that ship and stay shipped.

Companion to the Harrison AI Operator YouTube channel. Blog posts and videos are listed below, most recent first.

Don't tie yourself to a single AI. Run three in competition, make the final call yourself, and let the results judge all of them. The operating model for staying valuable in the AI age.

Your AI agent probably isn't failing because the model is weak. It's failing because you're fixing the wrong layer. The other 90% — context, memory, validation — is where production breaks.

Building an AI agent that works is easy. Building one that keeps working is where most teams fail. Long-form breakdown of the four-layer failure model.

Building an AI agent that works is easy. Building one that doesn't break is 90% of the work. Here's what that 90% actually looks like — from leaked source code and production A/B data.

How to use OpenAI's Codex plugin inside Claude Code — turning Claude Opus and GPT-5.4 into a dual-brain coding system. Setup, commands, rescue workflows, and when each brain wins.

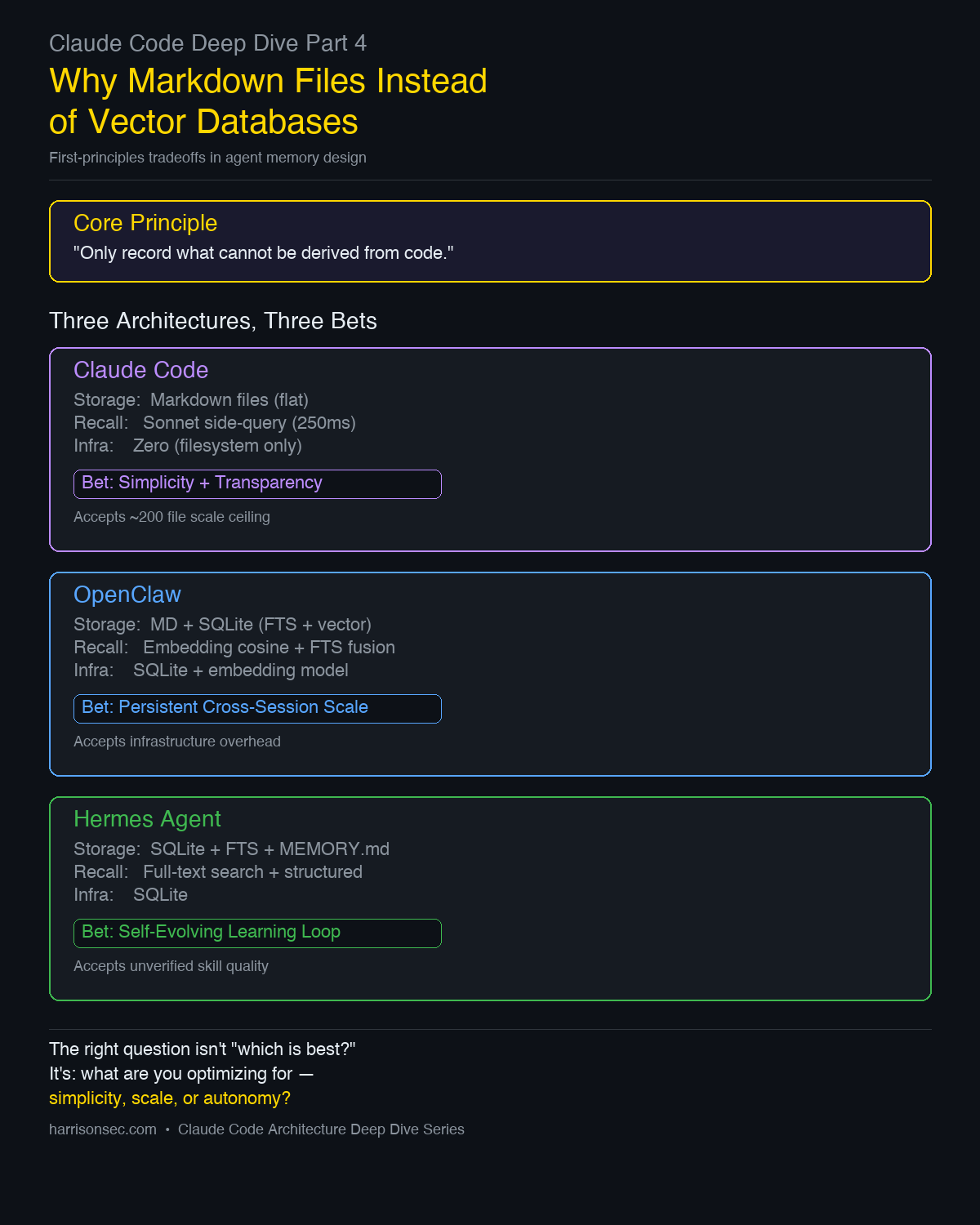

Claude Code's memory system looks simple on purpose. This piece breaks down the tradeoffs behind Markdown memories, Sonnet side-queries, and the decision to avoid vector databases.

Claude Code doesn't just stuff conversations into a 1M-token window. It uses a 5-level compression pipeline, cache-aware edits, and a final autocompact fallback to keep sessions alive.

Inside query.ts — the 1,729-line async generator that is Claude Code's beating heart. 10 steps per iteration, 9 continue points, 4-stage compression, and streaming tool execution. With line numbers.

AI API calls are unlike ordinary RPC: per-request cost varies 100×, tokens and models are first-class, streaming muddies timing, caching changes the pricing. A T-shaped instrumentation architecture — shared stem, specialized arms — that handles tracing, billing, and cost analytics without any of them contaminating the others.

© 2026 HarrisonSec. All rights reserved.